Last week I wrote about some of the questions which I think inform a more nuanced and meaningful discussion about how we should approach clinical governance of AI technologies in healthcare. As promised, this follow-up piece moves from questions to architecture and the ‘how to’. One of the key messages last time (I hope!) was that we already have the tools and frameworks we need to do this. Our challenge is not to invent new structures and frameworks, but to apply the skills we already have to this new technology.

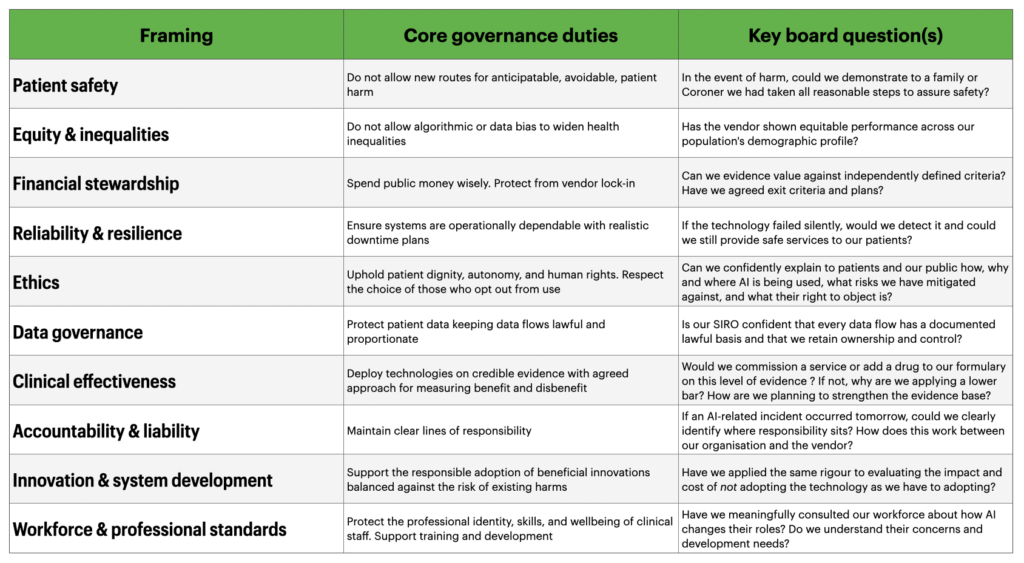

To answer the question of how we might do this, we first need to ask ourselves what we want our governance to deliver. Here, we have several duties to consider in order to address the key questions our Boards need assurance on. Below are my suggestions for these. Importantly, I am not claiming that failing in any one of these areas is the most dangerous mistake we can make. The greatest mistake would be failing to let our clinical governance structures recognise that a framework like this exists, because that leads to technology being adopted without proper scrutiny.

Hopefully anyone who reads this table will immediately think “This is nothing new…”. And that’s exactly the point. All of these framings are descended from, or are direct applications of, established models of clinical governance.

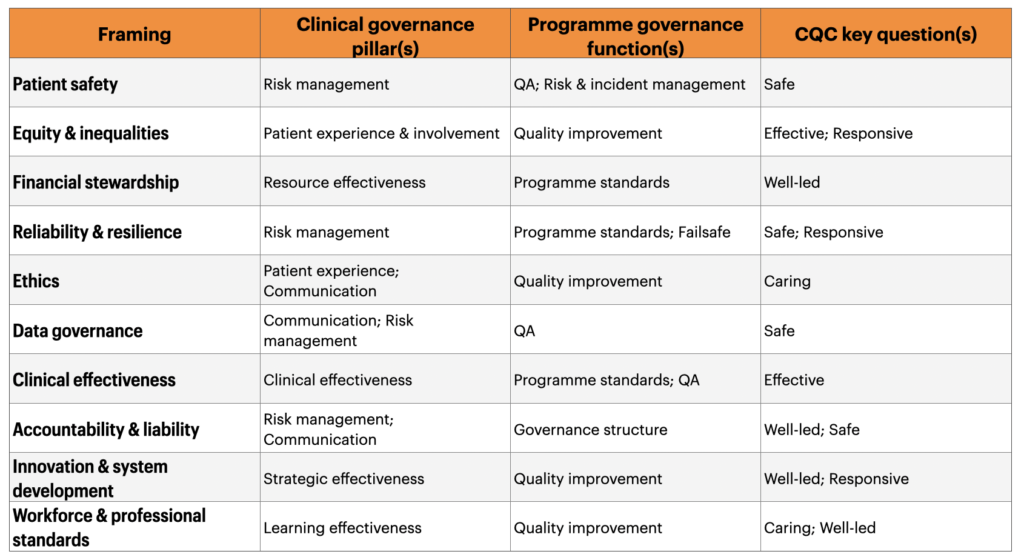

- The seven pillars of clinical governance set out by Scally and Donaldson map neatly onto these ten framings.

- So does the approach for linking clinical governance with oversight of larger programmes such as screening (programme standards, quality assurance, quality improvement, and risk and incident management). Here the parallels with AI-enabled pathways are especially easy to find. Both are protocol-driven, applied at population level, algorithmically structured, and dependent on consistent performance across diverse settings.

- Finally, it’s reasonable (and sensible) to reflect these framings against the CQC’s five key questions (Safe, Effective, Caring, Responsive, Well-led), the inspection lenses against which we are all assessed.

These links and mappings matter because they provide a clear counter to the position that “AI needs its own governance”. Again, the duties we have are not new – but the technical complexity of discharging them may be.

Mapping to these established models supports the suggestion that we don’t need new governance to safely steward AI. But what happens if we map our duties against the newer, AI-specific frameworks that have been proposed?

Where newer frameworks fit, and where they don’t…

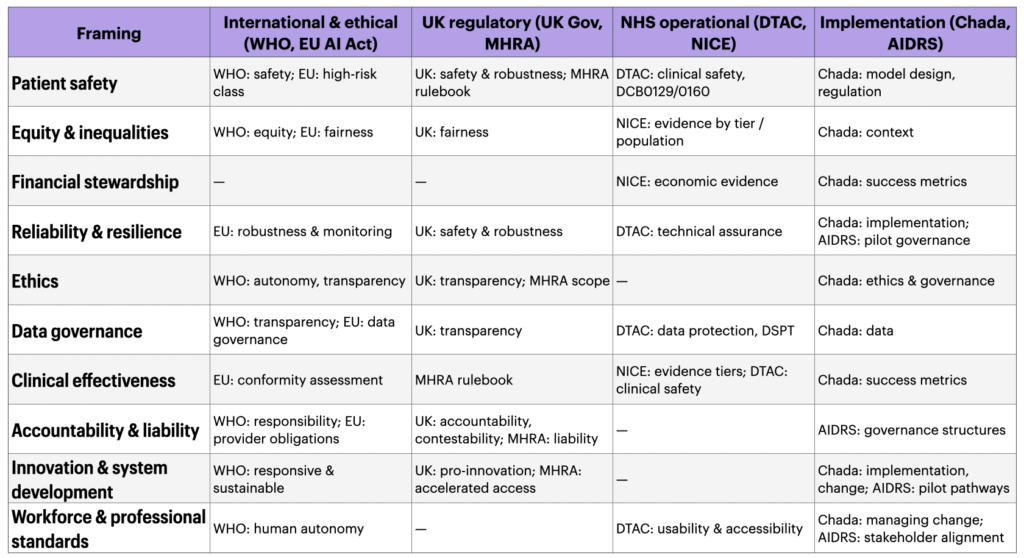

The table above could be seen as an argument to cling to the past in the face of a rapidly developing landscape of AI-specific governance models. And there are lots of these…

- The WHO Ethics and Governance of AI for Health guidance (updated in 2024 in relation to large multi-modal models) sets out six ethical principles for AI in healthcare.

- The UK Government’s five pro-innovation principles signal a national regulatory direction of travel, with the MHRA National Commission on AI in Healthcare due to report later this year. These essentially give us a regulatory rulebook.

- A few months ago, DTAC (the Digital Technology Assessment Criteria) was refreshed to update the operational baseline for technologies, and the NICE Evidence Standards Framework defines one evidential bar we can apply.

- The EU AI Act provides an international benchmark, classifying most healthcare AI as high-risk, and Chada et al.’s 2022 eight-considerations framework offers a more commissioner-focused lens.

However, when you map these new tools to the 10 governance framings above, the critical thing isn’t what they cover, but what they miss.

This deserves closer attention, because here the gaps matter more than the coverage:

- Financial stewardship is barely mentioned. No AI-specific governance model requires the demonstration of value for public money in the way standard NHS financial governance would.

- Ethics and accountability are not meaningfully covered by an operational tool such as DTAC.

- Workforce issues and concerns are barely addressed by any formal AI framework.

- Innovation as a duty (the counterbalancing obligation to adopt beneficial technology) has no operational tool at all. DTAC is a gatekeeping instrument designed to prevent harms from poor technology. It is not designed to accelerate adoption of good technology, or to hold organisations accountable for failing to innovate.

This is the argument for integrating the ten framings into our clinical governance architecture. No individual external model is sufficient. Each covers some duties but not all.

Bringing this all together

If you’ve made it this far through one or both of these pieces, thank you! To sum up my central argument in one closing pitch:

Good clinical governance of AI looks remarkably like good governance of everything else in healthcare. That shouldn’t be a surprise, but it seems to keep surprising people. These ten framings and their mappings are not a compliance checklist; they are a posture we can adopt to make effective use of the experience, knowledge, tools and skills we already have. They let our organisations hold a coherent view of what we are trying to deliver for our patients and of how those organisations need to adapt. Governance should be a design partner, not an overseer.

None of this is radical. Most of it is already written down somewhere. What’s harder, and what I think we require, is the willingness to hold it all together rather than carving off AI governance into its own underweight silo or letting it swell into a bureaucracy that blocks the thing it’s meant to enable.

We have the frameworks, we know our duties and we have the regulators. What we need to build are the skills and the confidence to govern AI as we govern everything else: with rigour, proportion, humility and clarity of purpose, keeping our focus on the people we want to help. This is governance in the foreground, not safety in the white space. The steering wheel, not the brake.